Web scraping practice: scraping Xiaohongshu comments (dynamic data)

Author: mmseoamin  Date: 2024-04-01

目录

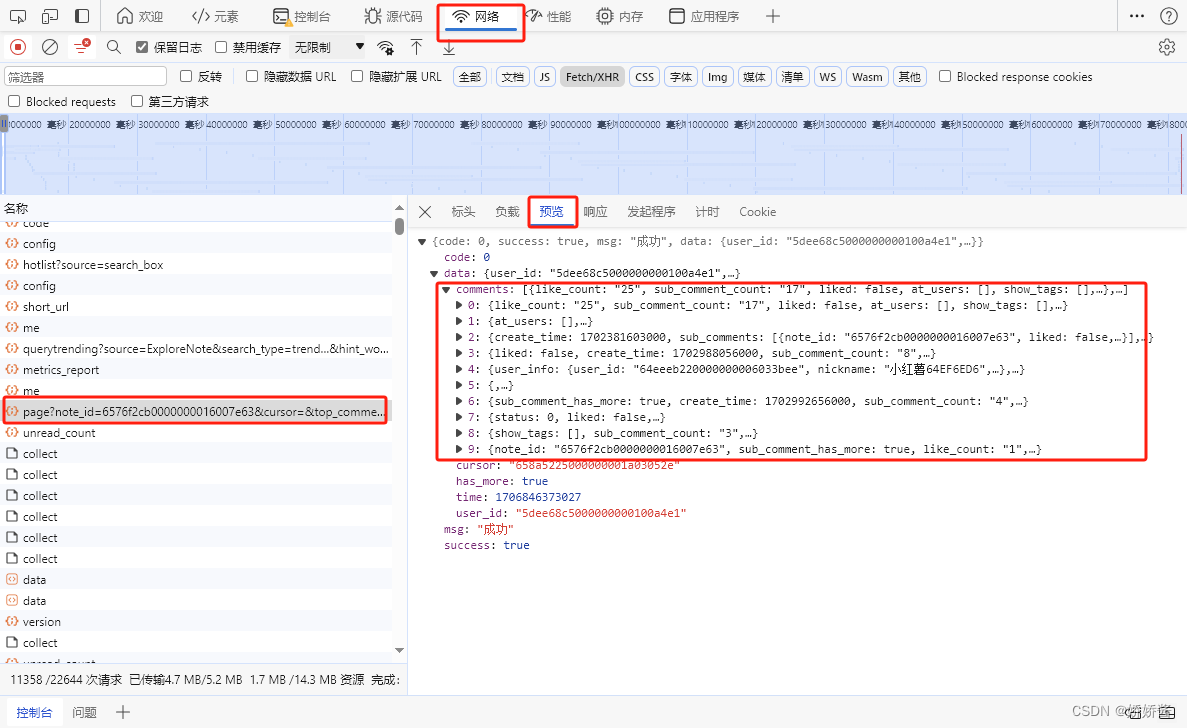

1.在笔记中打开检查,可以在“预览”中找到小红书的评论内容

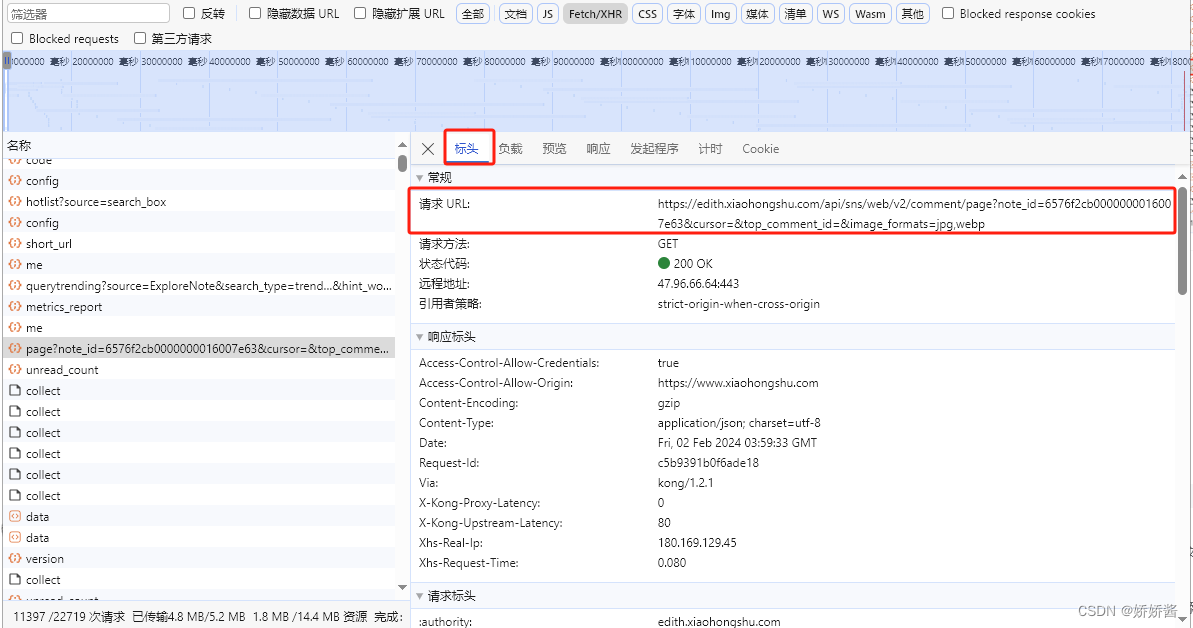

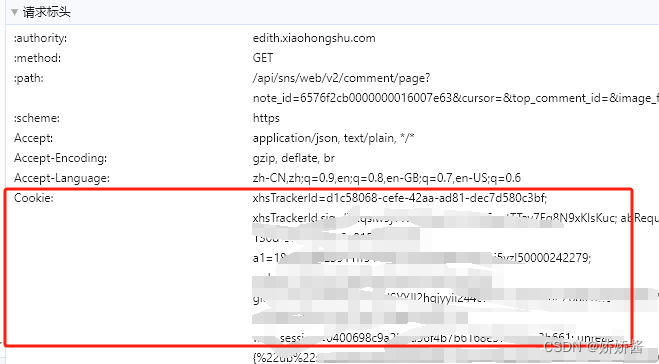

2.找到想要的请求后,在“标头”里找到你需要的URL、Cookie、User-Agent

二、写代码

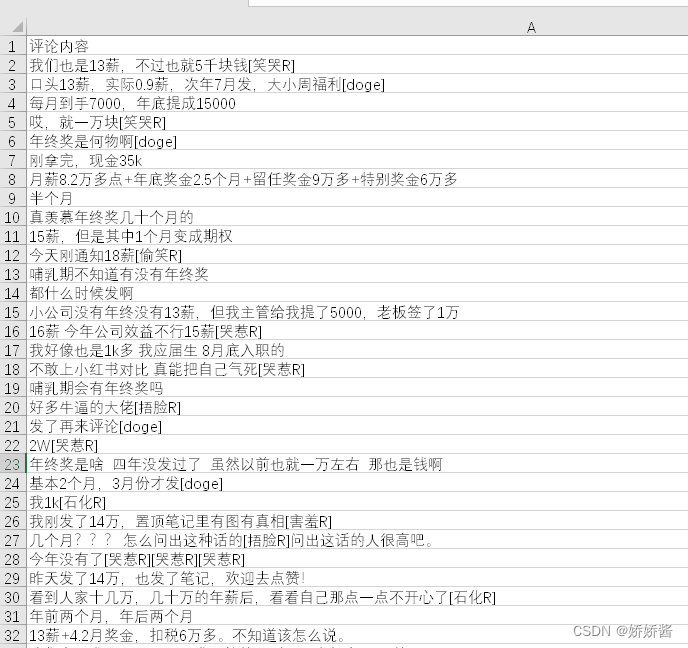

三、爬取结果

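I. Find the content you want to scrape

1. Right-click the note page and choose "Inspect". In the Network panel, select the comment request; its "Preview" tab shows the Xiaohongshu comment content.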

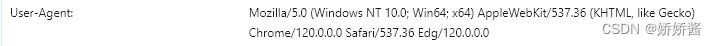

2. Once you have found the right request, open its "Headers" tab and copy the URL, Cookie, and User-Agent you need.
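To see what the copied URL actually contains, it helps to split it into its parts. The sketch below is only an illustration using the standard library; the URL is the one used in the code later in this post, written with an empty `cursor` as it appears for the first page of comments.

```python
from urllib.parse import urlsplit, parse_qs

# Request URL as copied from the "Headers" tab (first page, so cursor is empty).
url = ('https://edith.xiaohongshu.com/api/sns/web/v2/comment/page'
       '?note_id=6576f2cb0000000016007e63&cursor=&top_comment_id='
       '&image_formats=jpg,webp')

parts = urlsplit(url)
# keep_blank_values=True keeps parameters such as "cursor=" that have no value yet.
params = parse_qs(parts.query, keep_blank_values=True)

print(parts.netloc)               # host that serves the comment API
print(parts.path)                 # endpoint path
print(params['note_id'][0])       # identifies the note
print(repr(params['cursor'][0]))  # empty string on the first page
```

The only parameter that changes from page to page is `cursor`, so `note_id` is the one to swap when scraping a different note.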

II. Write the code

import csv
import random
from time import sleep

import requests


def main(page, csvwriter, cursor):
    headers = {
        # Use your own Cookie; it must come from a logged-in session.
        'Cookie': '*********',
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36 Edg/120.0.0.0',
    }
    try:
        csvwriter.writerow(('Comment',))  # header row
        while page < 7:  # scrape 7 pages of comments
            # The first page is requested with an empty cursor. Each response
            # carries the cursor of the next page, so rebuilding the URL with
            # the updated cursor on every loop is what turns the page.
            url = f'https://edith.xiaohongshu.com/api/sns/web/v2/comment/page?note_id=6576f2cb0000000016007e63&cursor={cursor}&top_comment_id=&image_formats=jpg,webp'
            resp = requests.get(url, headers=headers, timeout=10)
            data = resp.json()
            cursor = data['data']['cursor']
            page += 1
            for i in data['data']['comments']:
                print('Scraped:', i['content'])
                try:
                    # The trailing comma makes a one-element tuple. Without it,
                    # the string is written one character per cell.
                    csvwriter.writerow((i['content'],))
                except UnicodeEncodeError:
                    # Some comments contain emoji that gbk cannot encode;
                    # skip those comments.
                    continue
            sleep(3 + random.random())  # pause between pages
    except Exception as e:
        print("Failed to scrape the current page:", e)
        return


if __name__ == '__main__':
    cursor = ''
    with open('red_comment.csv', 'a', newline='', encoding='gbk') as file:
        csvwriter = csv.writer(file)
        main(0, csvwriter, cursor)
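The cursor-based page turning can be tried offline by separating "fetch one page" from "follow the cursor". The following is a minimal sketch, not the code used above: `iter_comments`, `fake_fetch`, and `PAGES` are hypothetical names, and stopping on an empty cursor is an assumption about how the last page looks. Only the response shape (`data.cursor`, `data.comments[].content`) is taken from the script.

```python
def iter_comments(fetch, max_pages=7):
    """Yield comment texts page by page, following the cursor in each response."""
    cursor = ''  # the first page is requested with an empty cursor
    for _ in range(max_pages):
        data = fetch(cursor)['data']
        for comment in data['comments']:
            yield comment['content']
        cursor = data['cursor']
        if not cursor:  # assumption: an empty cursor means no more pages
            break


# Hypothetical stand-in for the real API: three canned pages keyed by cursor.
PAGES = {
    '': {'data': {'cursor': 'c1', 'comments': [{'content': 'first'}]}},
    'c1': {'data': {'cursor': 'c2',
                    'comments': [{'content': 'second'}, {'content': 'third'}]}},
    'c2': {'data': {'cursor': '', 'comments': [{'content': 'last'}]}},
}


def fake_fetch(cursor):
    return PAGES[cursor]


comments = list(iter_comments(fake_fetch))
print(comments)  # ['first', 'second', 'third', 'last']
```

To use it against the real endpoint, `fetch` would be a function that builds the URL from the cursor, calls `requests.get` with the headers, and returns `resp.json()`.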

III. Scraping results
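Two details of the script shape what ends up in `red_comment.csv`: each comment is written as a one-element tuple, and comments that `gbk` cannot encode are skipped. The sketch below reproduces both effects in memory so they can be checked without running the scraper. The helper `encodable` is a hypothetical name, and `utf-8-sig` is mentioned only as a possible alternative encoding, not as what the script uses.

```python
import csv
import io

# 1) Tuple vs. bare string in writerow
buf = io.StringIO()
writer = csv.writer(buf)
writer.writerow(('nice',))  # one-element tuple: the whole comment is one cell
writer.writerow('nice')     # bare string: iterated character by character
rows = buf.getvalue().splitlines()
print(rows)  # ['nice', 'n,i,c,e']


# 2) Why comments containing emoji are missing from a gbk-encoded file
def encodable(text, encoding='gbk'):
    """Return True if text can be encoded with the given encoding."""
    try:
        text.encode(encoding)
        return True
    except UnicodeEncodeError:
        return False


emoji_comment = 'nice \U0001F600'
print(encodable('nice'))                      # True
print(encodable(emoji_comment))               # False: gbk has no emoji
print(encodable(emoji_comment, 'utf-8-sig'))  # True
```

If keeping emoji comments matters, opening the file with `encoding='utf-8-sig'` instead of `'gbk'` is one option to try; spreadsheet programs typically recognize the byte order mark it writes.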