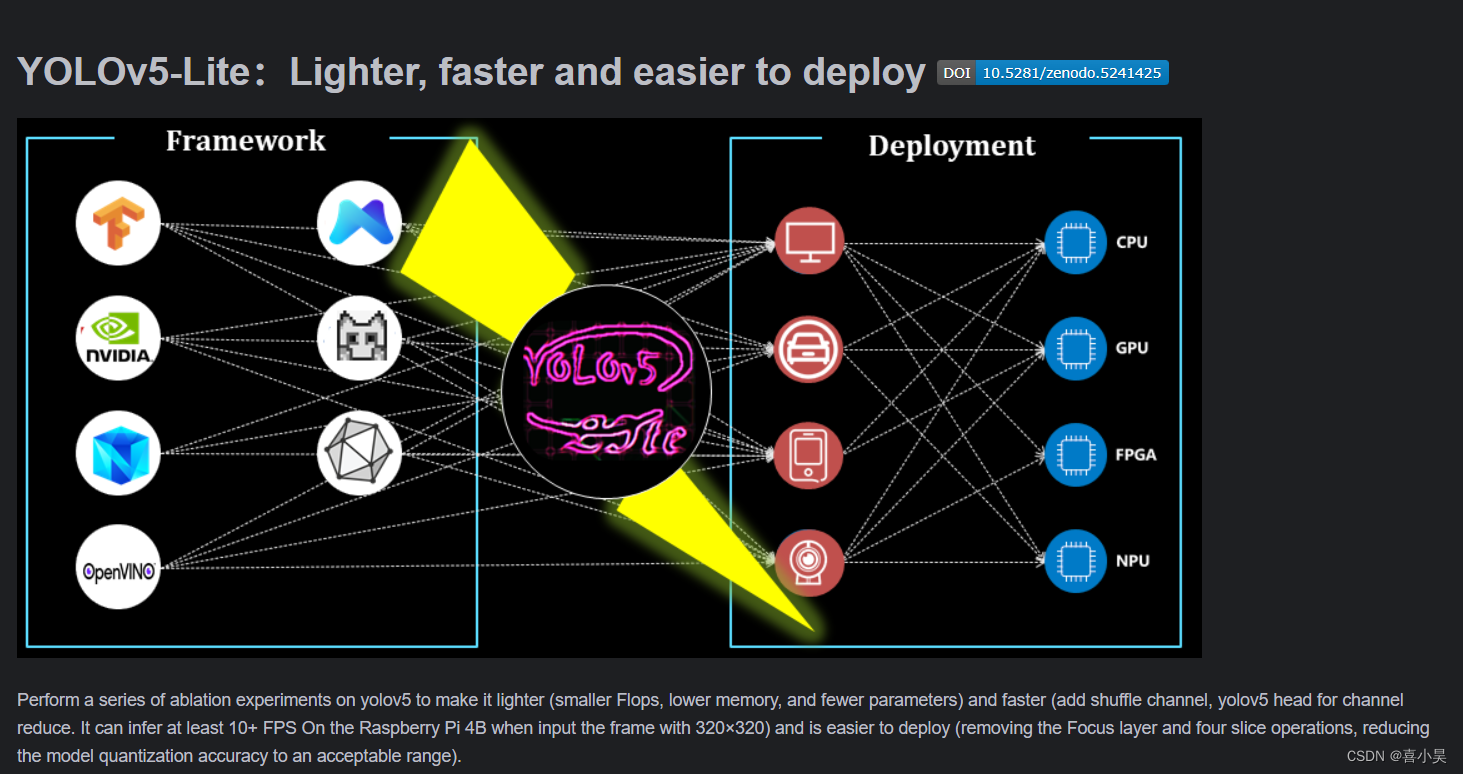

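Introduction to yolov5-Lite

See the project page here, or download it here.

In my own tests, the trained weight file is about 0.3 times the size of the yolov5-7.0 one (yolov5-Lite: 3.4 MB, yolov5-7.0: 13 MB), and detection confidence stays above 0.9 for both. I chose this Lite variant because I need to run image recognition on a smart car that only has a CPU. On the CPU, yolov5-7.0 is too slow: it processes only about 3 images per second, which falls short of what the application needs. The Lite model has far fewer weight parameters and is correspondingly faster. Deployed on the car, CPU inference is about 0.8 times (roughly 80%) faster. That is not a large gain, but it is just enough to be usable: 5 to 6 images per second.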
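The frame rates and ratios quoted above can be checked with a small timing helper. This is a minimal sketch, not the measurement code used for the numbers in this post: `measure_fps`, `relative_speedup` and `fake_detector` are hypothetical names, and the detector is replaced by a `time.sleep` stand-in so the snippet runs without a model.

```python
import time

def measure_fps(process_frame, frames, warmup=1):
    """Average frames per second of process_frame over the given frames."""
    frames = list(frames)
    for frame in frames[:warmup]:
        process_frame(frame)          # warm-up calls are not timed
    start = time.perf_counter()
    for frame in frames:
        process_frame(frame)
    elapsed = time.perf_counter() - start
    return len(frames) / elapsed if elapsed > 0 else float("inf")

def relative_speedup(fps_before, fps_after):
    """Fractional speedup: 0.8 means 80% faster than before."""
    return fps_after / fps_before - 1.0

def fake_detector(frame):
    """Hypothetical stand-in for a real detector call."""
    time.sleep(0.005)

fps = measure_fps(fake_detector, range(20))
print(f"{fps:.1f} FPS")
print(f"size ratio: {3.4 / 13:.2f}")  # weight sizes quoted above, in MB
print(f"speedup from 3 to 5.5 FPS: {relative_speedup(3, 5.5):.2f}")
```

Swapping `fake_detector` for a real per-frame detection call would give a comparable number on the target hardware.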

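Requirements

The algorithm ships with a detect.py script for detecting images and videos, but it is awkward to reuse flexibly in your own code. Image detection usually has real-time requirements, whereas detect.py is built around processing local image and video files. I modified part of the code and turned detect.py into an API: call a function directly, pass in an img array, and get back detections, a list of dictionaries holding each detected object's class, position, and confidence.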
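To make the shape of the result concrete, here is a minimal sketch of how calling code might consume such a list. The sample values, the class names, and the helpers `best_detection` and `box_center` are hypothetical; only the dictionary keys `'class'`, `'conf'` and `'position'` (as `[left, top, w, h]`) come from the code in this post.

```python
def best_detection(detections, wanted_class, min_conf=0.5):
    """Return the most confident detection of wanted_class, or None."""
    candidates = [d for d in detections
                  if d['class'] == wanted_class and d['conf'] >= min_conf]
    return max(candidates, key=lambda d: d['conf'], default=None)

def box_center(position):
    """Center point of a [left, top, w, h] box."""
    left, top, w, h = position
    return (left + w / 2, top + h / 2)

# Hypothetical output of one detection call
sample = [
    {'class': 'person', 'conf': 0.93, 'position': [40, 60, 100, 200]},
    {'class': 'person', 'conf': 0.71, 'position': [300, 80, 90, 180]},
    {'class': 'stop_sign', 'conf': 0.95, 'position': [500, 20, 50, 50]},
]

target = best_detection(sample, 'person')
if target is not None:
    print(target['class'], target['conf'], box_center(target['position']))
```

On the car, the box center could for example feed a steering decision, which is why a plain list of dictionaries is convenient here.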

Code changes

Original function

def detect(save_img=False):
    source, weights, view_img, save_txt, imgsz = opt.source, opt.weights, opt.view_img, opt.save_txt, opt.img_size
    save_img = not opt.nosave and not source.endswith('.txt')  # save inference images
    webcam = source.isnumeric() or source.endswith('.txt') or source.lower().startswith(
        ('rtsp://', 'rtmp://', 'http://', 'https://'))

    # Directories
    save_dir = Path(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok))  # increment run
    (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True)  # make dir

    # Initialize
    set_logging()
    device = select_device(opt.device)
    half = device.type != 'cpu'  # half precision only supported on CUDA

    # Load model
    model = attempt_load(weights, map_location=device)  # load FP32 model
    stride = int(model.stride.max())  # model stride
    imgsz = check_img_size(imgsz, s=stride)  # check img_size
    if half:
        model.half()  # to FP16

    # Second-stage classifier
    classify = False
    if classify:
        modelc = load_classifier(name='resnet101', n=2)  # initialize
        modelc.load_state_dict(torch.load('weights/resnet101.pt', map_location=device)['model']).to(device).eval()

    # Set Dataloader
    vid_path, vid_writer = None, None
    if webcam:
        view_img = check_imshow()
        cudnn.benchmark = True  # set True to speed up constant image size inference
        dataset = LoadStreams(source, img_size=imgsz, stride=stride)
    else:
        dataset = LoadImages(source, img_size=imgsz, stride=stride)

    # Get names and colors
    names = model.module.names if hasattr(model, 'module') else model.names
    colors = [[random.randint(0, 255) for _ in range(3)] for _ in names]

    # Run inference
    if device.type != 'cpu':
        model(torch.zeros(1, 3, imgsz, imgsz).to(device).type_as(next(model.parameters())))  # run once
    t0 = time.time()
    for path, img, im0s, vid_cap in dataset:
        img = torch.from_numpy(img).to(device)
        img = img.half() if half else img.float()  # uint8 to fp16/32
        img /= 255.0  # 0 - 255 to 0.0 - 1.0
        if img.ndimension() == 3:
            img = img.unsqueeze(0)

        # Inference
        t1 = time_synchronized()
        pred = model(img, augment=opt.augment)[0]

        # Apply NMS
        pred = non_max_suppression(pred, opt.conf_thres, opt.iou_thres, classes=opt.classes, agnostic=opt.agnostic_nms)
        t2 = time_synchronized()

        # Apply Classifier
        if classify:
            pred = apply_classifier(pred, modelc, img, im0s)

        # Process detections
        for i, det in enumerate(pred):  # detections per image
            if webcam:  # batch_size >= 1
                p, s, im0, frame = path[i], '%g: ' % i, im0s[i].copy(), dataset.count
            else:
                p, s, im0, frame = path, '', im0s, getattr(dataset, 'frame', 0)

            p = Path(p)  # to Path
            save_path = str(save_dir / p.name)  # img.jpg
            txt_path = str(save_dir / 'labels' / p.stem) + ('' if dataset.mode == 'image' else f'_{frame}')  # img.txt
            s += '%gx%g ' % img.shape[2:]  # print string
            gn = torch.tensor(im0.shape)[[1, 0, 1, 0]]  # normalization gain whwh
            if len(det):
                # Rescale boxes from img_size to im0 size
                det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

                # Print results
                for c in det[:, -1].unique():
                    n = (det[:, -1] == c).sum()  # detections per class
                    s += f"{n} {names[int(c)]}{'s' * (n > 1)}, "  # add to string

                # Write results
                for *xyxy, conf, cls in reversed(det):
                    if save_txt:  # Write to file
                        xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist()  # normalized xywh
                        line = (cls, *xywh, conf) if opt.save_conf else (cls, *xywh)  # label format
                        with open(txt_path + '.txt', 'a') as f:
                            f.write(('%g ' * len(line)).rstrip() % line + '\n')

                    if save_img or view_img:  # Add bbox to image
                        label = f'{names[int(cls)]} {conf:.2f}'
                        plot_one_box(xyxy, im0, label=label, color=colors[int(cls)], line_thickness=3)

            # Print time (inference + NMS)
            print(f'{s}Done. ({t2 - t1:.3f}s)')

            # Stream results
            if view_img:
                cv2.imshow(str(p), im0)
                cv2.waitKey(1)  # 1 millisecond

            # Save results (image with detections)
            if save_img:
                if dataset.mode == 'image':
                    cv2.imwrite(save_path, im0)
                else:  # 'video' or 'stream'
                    if vid_path != save_path:  # new video
                        vid_path = save_path
                        if isinstance(vid_writer, cv2.VideoWriter):
                            vid_writer.release()  # release previous video writer
                        if vid_cap:  # video
                            fps = vid_cap.get(cv2.CAP_PROP_FPS)
                            w = int(vid_cap.get(cv2.CAP_PROP_FRAME_WIDTH))
                            h = int(vid_cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
                        else:  # stream
                            fps, w, h = 30, im0.shape[1], im0.shape[0]
                            save_path += '.mp4'
                        vid_writer = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))
                    vid_writer.write(im0)

    if save_txt or save_img:
        s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else ''
        print(f"Results saved to {save_dir}{s}")

    print(f'Done. ({time.time() - t0:.3f}s)')

Modified function

class DETECT_API:
    def __init__(self, opt):
        weights, imgsz = opt.weights, opt.img_size
        self.device = select_device(opt.device)
        # device = device_ if torch.cuda.is_available() else 'cpu'  # device to run on: cuda device, i.e. 0 or 0,1,2,3 or cpu
        # self.device = device
        self.half = self.device.type != 'cpu'  # half precision only supported on CUDA
        # self.imgsz = (imgsz, imgsz)  # input image size in pixels
        self.conf_thres = opt.conf_thres  # object confidence threshold, used in NMS
        self.iou_thres = opt.iou_thres  # IoU threshold, used in NMS
        # self.max_det = max_det  # maximum number of objects per image, used in NMS
        self.classes = opt.classes  # keep only these classes in NMS; None (default) keeps every class: --class 0, or --class 0 2 3
        self.agnostic_nms = opt.agnostic_nms  # also suppress overlapping boxes across classes, default False
        self.augment = opt.augment  # test-time augmentation (TTA), default False
        # self.visualize = False  # feature-map visualization, default False
        # self.half = False  # FP16 inference shortens inference time, default False
        # self.dnn = False  # use OpenCV DNN for ONNX inference

        # Load model
        self.model = attempt_load(weights, map_location=self.device)  # load FP32 model
        if self.half:
            self.model.half()  # to FP16
        self.stride = int(self.model.stride.max())  # model stride
        self.imgsz = check_img_size(imgsz, s=self.stride)  # check img_size before the warm-up run uses it
        if self.device.type != 'cpu':
            self.model(torch.zeros(1, 3, self.imgsz, self.imgsz).to(self.device).type_as(next(self.model.parameters())))  # run once

        # Get names and colors
        self.names = self.model.module.names if hasattr(self.model, 'module') else self.model.names
        self.colors = [[random.randint(0, 255) for _ in range(3)] for _ in self.names]

    def detect2(self, img):
        '''
        Detect objects in an image passed as an array.

        Args:
            img: image array (BGR, e.g. as returned by cv2.imread)

        Returns:
            list of dicts: {'class': cls, 'conf': conf, 'position': [left, top, w, h]}
        '''
        detections = []  # collected results
        s = ''
        im0 = img.copy()  # keep the original image; its shape is needed to rescale the boxes

        # Padded resize
        img = letterbox(im0, self.imgsz, stride=self.stride)[0]

        # Convert
        img = img[:, :, ::-1].transpose(2, 0, 1)  # BGR to RGB, HWC to CHW
        img = ascontiguousarray(img)  # np.ascontiguousarray(img)
        img = torch.from_numpy(img).to(self.device)
        img = img.half() if self.half else img.float()  # uint8 to fp16/32
        img /= 255.0  # 0 - 255 to 0.0 - 1.0
        if img.ndimension() == 3:
            img = img.unsqueeze(0)

        # Inference
        t1 = time_synchronized()
        pred = self.model(img, augment=self.augment)[0]

        # Apply NMS
        pred = non_max_suppression(pred, self.conf_thres, self.iou_thres, classes=self.classes, agnostic=self.agnostic_nms)
        t2 = time_synchronized()

        # Process detections
        for i, det in enumerate(pred):  # detections per image
            s = '%gx%g ' % img.shape[2:]  # print string
            if len(det):
                # Rescale boxes from img_size to im0 size
                det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

                # Write results
                for *xyxy, conf, cls in reversed(det):
                    xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4))).view(-1).tolist()  # center x, center y, w, h
                    xywh = [round(x) for x in xywh]
                    xywh = [xywh[0] - xywh[2] // 2, xywh[1] - xywh[3] // 2, xywh[2],
                            xywh[3]]  # object position as (left, top, w, h)
                    cls = self.names[int(cls)]
                    conf = float(conf)
                    detections.append({'class': cls, 'conf': conf, 'position': xywh})

        # Print the results
        for d in detections:
            print(d)

        # Print time (inference + NMS)
        print(f'{s}Done. ({t2 - t1:.3f}s)')
        return detections

The code is wrapped in a class: load the model once, then pass in images for detection.
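The position arithmetic in the method above can be looked at in isolation. This is a minimal pure-Python sketch with hypothetical helper names: `xyxy_to_center_wh` only imitates what `xyxy2xywh` does for a single box, and `center_to_ltwh` repeats the rounding and integer division used in the class. For boxes with odd widths or heights the result may be off by one pixel because of the rounding.

```python
def xyxy_to_center_wh(x1, y1, x2, y2):
    """Corner format to (center x, center y, width, height)."""
    return ((x1 + x2) / 2, (y1 + y2) / 2, x2 - x1, y2 - y1)

def center_to_ltwh(cx, cy, w, h):
    """Center format to [left, top, width, height], rounded to whole pixels."""
    cx, cy, w, h = (round(v) for v in (cx, cy, w, h))
    return [cx - w // 2, cy - h // 2, w, h]

box = center_to_ltwh(*xyxy_to_center_wh(10, 20, 110, 220))
print(box)

# The [left, top, w, h] form plugs straight into cv2.rectangle-style corners:
left, top, w, h = box
print((left, top), (left + w, top + h))
```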

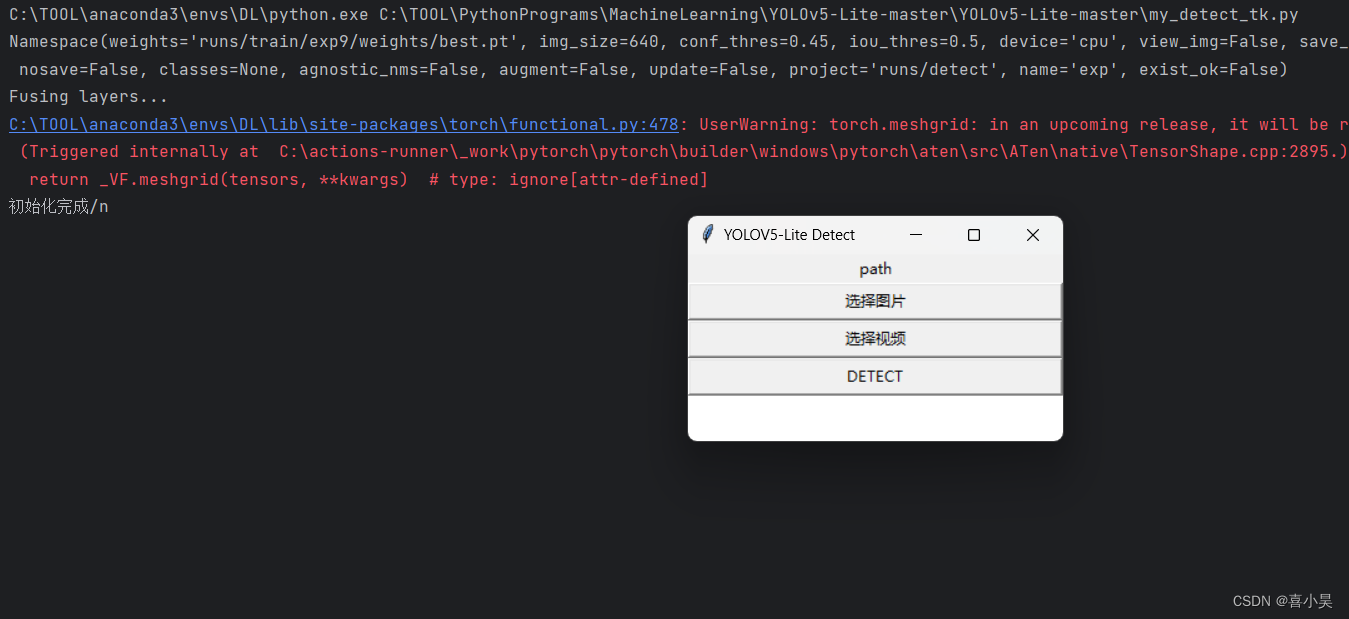

To make testing easier, I wrote a simple GUI with tkinter, which ships with Python.

# GUI layout
detect_state = 0  # 0 = nothing selected, 1 = image, 2 = video
top = tk.Tk()
top.title('YOLOV5-Lite Detect')
top['bg'] = 'white'

# Center a 300x150 window on the screen
width = 300
height = 150
win_width = top.winfo_screenwidth()
win_height = top.winfo_screenheight()
center_place = str(int(win_width / 2 - width / 2)) + '+' + str(int(win_height / 2 - height / 2))
top.geometry(str(width) + 'x' + str(height) + '+' + center_place)

label = tk.Label(top, text='path')
label.pack(fill='both')
btn_img = tk.Button(top, text='Select image', command=select_img)
btn_img.pack(fill='both')
btn_video = tk.Button(top, text='Select video', command=select_video)
btn_video.pack(fill='both')
btn_detect = tk.Button(top, text='DETECT', command=mt_detect)
btn_detect.pack(fill='both')
top.mainloop()
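The window-centering step builds a tkinter geometry string of the form `WIDTHxHEIGHT+X+Y`. As a minimal sketch, the same computation can be pulled into a small pure function, which also makes it checkable without opening a window. The name `centered_geometry` is hypothetical and the 1920x1080 screen size is only an example value.

```python
def centered_geometry(width, height, screen_width, screen_height):
    """Geometry string that centers a width x height window on the screen."""
    x = (screen_width - width) // 2
    y = (screen_height - height) // 2
    return f"{width}x{height}+{x}+{y}"

print(centered_geometry(300, 150, 1920, 1080))

# With a real Tk root this would be used as:
#   top.geometry(centered_geometry(300, 150,
#                                  top.winfo_screenwidth(),
#                                  top.winfo_screenheight()))
```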

Full code (script that calls the API)

import tkinter as tk
from tkinter import filedialog  # file picker; filedialog.askopenfilename() returns the path of the single selected file
import argparse
import time
from pathlib import Path

import cv2
import torch
import torch.backends.cudnn as cudnn
from numpy import random, ascontiguousarray

from models.experimental import attempt_load
from utils.datasets import LoadStreams, LoadImages, letterbox
from utils.general import check_img_size, check_requirements, check_imshow, non_max_suppression, apply_classifier, \
    scale_coords, xyxy2xywh, strip_optimizer, set_logging, increment_path
from utils.plots import plot_one_box
from utils.torch_utils import select_device, load_classifier, time_synchronized

class DETECT_API:
    def __init__(self, opt):
        weights, imgsz = opt.weights, opt.img_size
        self.device = select_device(opt.device)
        # device = device_ if torch.cuda.is_available() else 'cpu'  # device to run on: cuda device, i.e. 0 or 0,1,2,3 or cpu
        # self.device = device
        self.half = self.device.type != 'cpu'  # half precision only supported on CUDA
        # self.imgsz = (imgsz, imgsz)  # input image size in pixels
        self.conf_thres = opt.conf_thres  # object confidence threshold, used in NMS
        self.iou_thres = opt.iou_thres  # IoU threshold, used in NMS
        # self.max_det = max_det  # maximum number of objects per image, used in NMS
        self.classes = opt.classes  # keep only these classes in NMS; None (default) keeps every class: --class 0, or --class 0 2 3
        self.agnostic_nms = opt.agnostic_nms  # also suppress overlapping boxes across classes, default False
        self.augment = opt.augment  # test-time augmentation (TTA), default False
        # self.visualize = False  # feature-map visualization, default False
        # self.half = False  # FP16 inference shortens inference time, default False
        # self.dnn = False  # use OpenCV DNN for ONNX inference

        # Load model
        self.model = attempt_load(weights, map_location=self.device)  # load FP32 model
        if self.half:
            self.model.half()  # to FP16
        self.stride = int(self.model.stride.max())  # model stride
        self.imgsz = check_img_size(imgsz, s=self.stride)  # check img_size before the warm-up run uses it
        if self.device.type != 'cpu':
            self.model(torch.zeros(1, 3, self.imgsz, self.imgsz).to(self.device).type_as(next(self.model.parameters())))  # run once

        # Get names and colors
        self.names = self.model.module.names if hasattr(self.model, 'module') else self.model.names
        self.colors = [[random.randint(0, 255) for _ in range(3)] for _ in self.names]

    def detect(self, img_path):
        '''
        Detect objects in an image file. Video paths are not supported.

        Args:
            img_path: path to the image

        Returns:
            list of dicts: {'class': cls, 'conf': conf, 'position': [left, top, w, h]}
        '''
        # Set Dataloader
        dataset = LoadImages(img_path, img_size=self.imgsz, stride=self.stride)
        detections = []  # collected results
        s = ''
        for path, img, im0s, vid_cap in dataset:
            print(path)
            img = torch.from_numpy(img).to(self.device)
            img = img.half() if self.half else img.float()  # uint8 to fp16/32
            img /= 255.0  # 0 - 255 to 0.0 - 1.0
            if img.ndimension() == 3:
                img = img.unsqueeze(0)

            # Inference
            t1 = time_synchronized()
            pred = self.model(img, augment=self.augment)[0]

            # Apply NMS
            pred = non_max_suppression(pred, self.conf_thres, self.iou_thres, classes=self.classes, agnostic=self.agnostic_nms)
            t2 = time_synchronized()

            # Process detections
            for i, det in enumerate(pred):  # detections per image
                s = '%gx%g ' % img.shape[2:]  # print string
                im0 = im0s
                if len(det):
                    # Rescale boxes from img_size to im0 size
                    det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

                    # Write results
                    for *xyxy, conf, cls in reversed(det):
                        xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4))).view(-1).tolist()  # center x, center y, w, h
                        xywh = [round(x) for x in xywh]
                        xywh = [xywh[0] - xywh[2] // 2, xywh[1] - xywh[3] // 2, xywh[2],
                                xywh[3]]  # object position as (left, top, w, h)
                        cls = self.names[int(cls)]
                        conf = float(conf)
                        detections.append({'class': cls, 'conf': conf, 'position': xywh})

            # Print the results
            for d in detections:
                print(d)

            # Print time (inference + NMS)
            print(f'{s}Done. ({t2 - t1:.3f}s)')
        return detections

    def detect2(self, img):
        '''
        Detect objects in an image passed as an array.

        Args:
            img: image array (BGR, e.g. as returned by cv2.imread)

        Returns:
            list of dicts: {'class': cls, 'conf': conf, 'position': [left, top, w, h]}
        '''
        detections = []  # collected results
        s = ''
        im0 = img.copy()  # keep the original image; its shape is needed to rescale the boxes

        # Padded resize
        img = letterbox(im0, self.imgsz, stride=self.stride)[0]

        # Convert
        img = img[:, :, ::-1].transpose(2, 0, 1)  # BGR to RGB, HWC to CHW
        img = ascontiguousarray(img)  # np.ascontiguousarray(img)
        img = torch.from_numpy(img).to(self.device)
        img = img.half() if self.half else img.float()  # uint8 to fp16/32
        img /= 255.0  # 0 - 255 to 0.0 - 1.0
        if img.ndimension() == 3:
            img = img.unsqueeze(0)

        # Inference
        t1 = time_synchronized()
        pred = self.model(img, augment=self.augment)[0]

        # Apply NMS
        pred = non_max_suppression(pred, self.conf_thres, self.iou_thres, classes=self.classes, agnostic=self.agnostic_nms)
        t2 = time_synchronized()

        # Process detections
        for i, det in enumerate(pred):  # detections per image
            s = '%gx%g ' % img.shape[2:]  # print string
            if len(det):
                # Rescale boxes from img_size to im0 size
                det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

                # Write results
                for *xyxy, conf, cls in reversed(det):
                    xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4))).view(-1).tolist()  # center x, center y, w, h
                    xywh = [round(x) for x in xywh]
                    xywh = [xywh[0] - xywh[2] // 2, xywh[1] - xywh[3] // 2, xywh[2],
                            xywh[3]]  # object position as (left, top, w, h)
                    cls = self.names[int(cls)]
                    conf = float(conf)
                    detections.append({'class': cls, 'conf': conf, 'position': xywh})

        # Print the results
        for d in detections:
            print(d)

        # Print time (inference + NMS)
        print(f'{s}Done. ({t2 - t1:.3f}s)')
        return detections

def select_img():
    global detect_state
    filepath = filedialog.askopenfilename(title='Select image', filetypes=[('Images', '*.jpg *.png'), ('All files', '*')])
    if not filepath:  # dialog was cancelled, keep the previous state
        return
    label['text'] = filepath
    detect_state = 1  # 1 = image selected

def select_video():
    global detect_state
    filepath = filedialog.askopenfilename(title='Select video', filetypes=[('Videos', '*.mp4'), ('All files', '*')])
    if not filepath:  # dialog was cancelled, keep the previous state
        return
    label['text'] = filepath
    detect_state = 2  # 2 = video selected

def mt_detect():
    global detect_state
    path = label['text']
    print(path)
    if not detect_state:
        print('Please select an image or a video')
    else:
        # opt.source = path
        if detect_state == 1:
            show_img(path)
        elif detect_state == 2:
            show_video(path)
        detect_state = 0  # reset so the next run needs a new selection

def show_img(img_path):
    # # Alternative: pass the image path instead of an array
    # detections = Detect.detect(img_path)
    # print(detections)
    img = cv2.imread(img_path)
    t1 = time.time()
    detections = Detect.detect2(img)
    t2 = time.time()
    for i in detections:
        # print(i)
        x, y, w, h = i['position']
        img = cv2.rectangle(img, (x, y), (x + w, y + h), (0, 0, 255), 3)
        img = cv2.putText(img, "{} {}".format(i['class'], round(i['conf'], 4)), (x, y - 5),
                          cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 0, 255), 1,
                          cv2.LINE_AA)
    img = cv2.putText(img, "{}s".format(round((t2 - t1), 3)),
                      (10, 50), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 1, cv2.LINE_AA)
    cv2.imshow('yolov5-Lite img', img)
    cv2.waitKey(0)
    cv2.destroyAllWindows()

def show_video(video_path):
    cap = cv2.VideoCapture(video_path)
    while cap.isOpened():
        ret, img = cap.read()
        if not ret:  # end of the video or a failed read
            break
        t1 = time.time()
        detections = Detect.detect2(img)
        t2 = time.time()
        elapsed = max(t2 - t1, 1e-6)  # avoid division by zero in the FPS display
        for i in detections:
            # print(i)
            x, y, w, h = i['position']
            img = cv2.rectangle(img, (x, y), (x + w, y + h), (0, 0, 255), 3)
            img = cv2.putText(img, "{} {}".format(i['class'], round(i['conf'], 4)), (x, y - 5), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 0, 255), 1, cv2.LINE_AA)
        img = cv2.putText(img, "{}FPS - {}s".format(round(1 / elapsed, 2), round(elapsed, 3)), (10, 50), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 1, cv2.LINE_AA)
        cv2.imshow('yolov5-Lite img', img)
        # cv2.waitKey(1000)
        if cv2.waitKey(10) == ord('q'):  # press q to stop
            break
    cap.release()
    cv2.destroyAllWindows()

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', nargs='+', type=str, default='runs/train/exp9/weights/best.pt',
                        help='model.pt path(s)')
    # parser.add_argument('--source', type=str, default='', help='source')  # file/folder, 0 for webcam
    parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')
    parser.add_argument('--conf-thres', type=float, default=0.45, help='object confidence threshold')
    parser.add_argument('--iou-thres', type=float, default=0.5, help='IOU threshold for NMS')
    parser.add_argument('--device', default='cpu', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--view-img', action='store_true', help='display results')
    parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')
    parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')
    parser.add_argument('--nosave', action='store_true', help='do not save images/videos')
    parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --class 0, or --class 0 2 3')
    parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')
    parser.add_argument('--augment', action='store_true', help='augmented inference')
    parser.add_argument('--update', action='store_true', help='update all models')
    parser.add_argument('--project', default='runs/detect', help='save results to project/name')
    parser.add_argument('--name', default='exp', help='save results to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    opt = parser.parse_args()
    print(opt)
    check_requirements(exclude=('pycocotools', 'thop'))

    # Initialize the model
    Detect = DETECT_API(opt)
    print('Initialization complete\n')

    # GUI layout
    detect_state = 0  # 0 = nothing selected, 1 = image, 2 = video
    top = tk.Tk()
    top.title('YOLOV5-Lite Detect')
    top['bg'] = 'white'

    # Center a 300x150 window on the screen
    width = 300
    height = 150
    win_width = top.winfo_screenwidth()
    win_height = top.winfo_screenheight()
    center_place = str(int(win_width / 2 - width / 2)) + '+' + str(int(win_height / 2 - height / 2))
    top.geometry(str(width) + 'x' + str(height) + '+' + center_place)

    label = tk.Label(top, text='path')
    label.pack(fill='both')
    btn_img = tk.Button(top, text='Select image', command=select_img)
    btn_img.pack(fill='both')
    btn_video = tk.Button(top, text='Select video', command=select_video)
    btn_video.pack(fill='both')
    btn_detect = tk.Button(top, text='DETECT', command=mt_detect)
    btn_detect.pack(fill='both')
    top.mainloop()

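How to run

First, set up the runtime environment for yolov5-Lite so that you can train a model correctly. The setup is the same as for yolov5-7.0. If you hit errors, check whether the versions of the relevant libraries meet the requirements. You generally do not need the latest releases, and versions that are too new tend to cause problems. Once the weights are trained, change the default in parser.add_argument('--weights', nargs='+', type=str, default='runs/train/exp9/weights/best.pt', help='model.pt path(s)') to your own weights path.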
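Instead of editing the default, the weights path can also be passed on the command line. The sketch below uses only the standard argparse module and a cut-down parser. The path `my_weights/best.pt` and the helper `build_parser` are hypothetical. One detail worth knowing: because of `nargs='+'`, a value given on the command line arrives as a list, while the untouched default stays a plain string.

```python
import argparse

def build_parser(default_weights='runs/train/exp9/weights/best.pt'):
    """Cut-down version of the script's parser, for illustration only."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', nargs='+', type=str, default=default_weights,
                        help='model.pt path(s)')
    parser.add_argument('--device', default='cpu',
                        help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    return parser

# No arguments: the default string is used as-is
opt_default = build_parser().parse_args([])
print(type(opt_default.weights).__name__, opt_default.weights)

# Equivalent to running the script with: --weights my_weights/best.pt
opt_custom = build_parser().parse_args(['--weights', 'my_weights/best.pt'])
print(type(opt_custom.weights).__name__, opt_custom.weights)
```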

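Screenshots

The red warnings come from the torch version and can be ignored. So far I have not noticed any effect.

Finally

That's all for now. Leave a comment if you have questions.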
